Machine-learning models can carry biases similar to those that human decision-makers accumulate over time

By Kiran N. Kumar

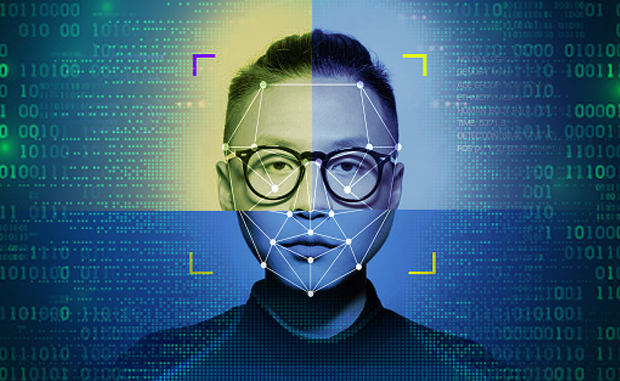

An MIT study published in March 2022 showed that a machine-learning model trained on an unbalanced dataset can make unfair predictions, for example when the dataset contains far more images of people with lighter skin than of people with darker skin.

MIT researchers also found that machine-learning models popular for image-recognition tasks encode bias when trained on unbalanced data, and that this bias persists: it is impossible to fix later on, even when the model is retrained with a balanced dataset.

The researchers therefore developed a technique that introduces fairness directly into the model's internal representation, so that it produces fair outputs even when it is trained on unfair data. The technique reduced bias in downstream tasks such as facial recognition and animal species classification.

“In machine learning, it is common to blame the data for bias in models. But we don’t always have balanced data. So, we need to come up with methods that actually fix the problem,” says lead author Natalie Dullerud, a graduate student in the Healthy Machine Learning (ML) Group of the Computer Science and Artificial Intelligence Laboratory (CSAIL) at MIT.

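These image-recognition models learn a similarity metric: they map, or embed, each image as a point in a space where similar images land close together and dissimilar ones far apart. In facial recognition, the metric is unfair if it is more likely to embed the faces of darker-skinned individuals close to each other even when they are not the same person, while it does not cluster the faces of unrelated lighter-skinned individuals in the same way.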

“This is quite scary because it is a very common practice for companies to release these embedding models and then people fine-tune them for some downstream classification task,” Dullerud says. Even if a user retrains the model on a balanced dataset, Dullerud emphasizes, performance gaps of at least 20% remain.
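One way to picture the embedding disparity described here: a fair metric should keep the faces of different people roughly equally far apart in every group. The sketch below is an illustrative assumption, not the study's evaluation code. It measures the average distance between embeddings of different people within each group; the helper name `mean_impostor_distance` and the toy 2-D coordinates are hypothetical.

```python
import math
from itertools import combinations


def mean_impostor_distance(embeddings, identities, groups, group):
    """Average embedding distance between images of *different* people
    within one demographic group (hypothetical helper for illustration)."""
    idx = [i for i, g in enumerate(groups) if g == group]
    dists = [
        math.dist(embeddings[i], embeddings[j])
        for i, j in combinations(idx, 2)
        if identities[i] != identities[j]  # only compare unrelated people
    ]
    return sum(dists) / len(dists)


# Toy 2-D embeddings: four different people per group (made-up numbers).
embeddings = [(0.0, 0.0), (3.0, 0.0), (0.0, 3.0), (3.0, 3.0),   # group A
              (0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]   # group B
identities = ["a1", "a2", "a3", "a4", "b1", "b2", "b3", "b4"]
groups = ["A"] * 4 + ["B"] * 4

for g in ("A", "B"):
    print(g, round(mean_impostor_distance(embeddings, identities, groups, g), 3))
```

In this toy data, unrelated people in group B sit three times closer together than those in group A, which is the kind of gap that would make a model likelier to confuse two different group-B faces.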

Another recent MIT study found that explanation methods for machine-learning models can be less accurate for some groups of people than for others, and so can carry biases similar to those that human decision-makers accumulate over time.

Many machine-learning models are used in safety-critical settings such as healthcare, college admissions, and the justice system, and studies have shown that human decisions can become more accurate when assisted by such models.

But the two MIT studies raise questions about whether machine-learning predictions, and the explanation methods used to interpret them, can be trusted, especially for disadvantaged subgroups.

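Many explanation methods work by approximating a complex model with a simpler model that is easier to interpret; how closely the simple model reproduces the original model's predictions is its fidelity, or approximation quality. When MIT researchers took a hard look at the fairness of some widely used explanation methods, they found that this approximation quality can vary dramatically between subgroups and is often significantly lower for minoritized subgroups.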

For example, if the approximation quality is lower for female applicants, the explanations will disagree with the model's actual predictions more often for women, which could lead a law school admissions officer to wrongly reject more women than men.
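An explanation model's fidelity can be measured as how often its output agrees with the original model's prediction. The following minimal sketch computes that agreement rate separately for each subgroup and reports the gap between the best- and worst-served groups. The function names and the ten toy applicants are hypothetical, and the study may measure fidelity with additional metrics.

```python
from collections import defaultdict


def fidelity_by_group(model_preds, surrogate_preds, groups):
    """Fraction of samples, per subgroup, where the simple explanation
    model agrees with the black-box model's prediction."""
    agree = defaultdict(int)
    total = defaultdict(int)
    for m, s, g in zip(model_preds, surrogate_preds, groups):
        total[g] += 1
        agree[g] += int(m == s)
    return {g: agree[g] / total[g] for g in total}


def fidelity_gap(fidelities):
    """Difference between the best- and worst-served subgroup."""
    return max(fidelities.values()) - min(fidelities.values())


# Made-up admit (1) / reject (0) decisions for ten applicants.
model     = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
surrogate = [1, 0, 1, 1, 0, 0, 0, 0, 1, 1]
groups    = ["M", "M", "M", "M", "M", "F", "F", "F", "F", "F"]

fid = fidelity_by_group(model, surrogate, groups)
print(fid, fidelity_gap(fid))
```

Here the explanation model matches every decision for the male applicants but only two of five for the female applicants, a fidelity gap of 0.6. The numbers are exaggerated for clarity, but the mismatch is of the same kind the researchers measured.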

Once the MIT researchers saw how pervasive these fairness gaps were, they tried several techniques to level the playing field. They were able to shrink some gaps but could not eradicate them.

“What this means in the real world is that people might incorrectly trust predictions more for some subgroups than for others,” says Aparna Balagopalan, lead author of the study [https://arxiv.org/pdf/2205.03295.pdf] and a graduate student in the Healthy ML group of the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL).

“So, improving explanation models is important, but communicating the details of these models to end users is equally important,” she says. “These gaps exist, so users may want to adjust their expectations as to what they are getting when they use these explanations.”

The researchers found clear fidelity gaps for all the datasets and explanation models they evaluated. Fidelity for disadvantaged groups was often much lower, by up to 21% in some instances.

The law school dataset had a fidelity gap of 7% between race subgroups, meaning the approximations for some subgroups were wrong 7% more often on average. If there are 10,000 applicants from these subgroups in the dataset, for example, a significant portion could be wrongly rejected, Balagopalan explains.
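The scale of that example is simple arithmetic. The calculation below assumes, for simplicity, that a 7-percentage-point fidelity gap applies uniformly to 10,000 applicants from the worse-served subgroup, and estimates how many more of them would receive explanations that disagree with the model. The function name is hypothetical, and an explanation mismatch is not automatically a wrongful rejection.

```python
def extra_mismatches(num_applicants, fidelity_gap):
    """Expected number of additional applicants whose explanation
    disagrees with the model, assuming the gap applies uniformly."""
    return round(num_applicants * fidelity_gap)


print(extra_mismatches(10_000, 0.07))
```

Under that assumption, the gap amounts to roughly 700 additional applicants whose explanations do not reflect what the model actually decided.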

Marzyeh Ghassemi, a co-author of the study, an assistant professor, and head of the Healthy ML Group, stressed how widespread the problem is: “I was surprised by how pervasive these fidelity gaps are in all the datasets we evaluated.”

“It is hard to overemphasize how commonly explanations are used as a ‘fix’ for black-box machine-learning models. In this paper, we are showing that the explanation methods themselves are imperfect approximations that may be worse for some subgroups.”

After identifying the fidelity gaps, the researchers trained the explanation models to identify regions of a dataset that could be prone to low fidelity. These retraining strategies reduced some fidelity gaps but did not eliminate them entirely.
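The article does not say how the explanation models were taught to focus on low-fidelity regions. One common way to express the general idea is sample weighting: samples from subgroups where the explanation model disagrees more often with the original model get larger weights when the explanation model is retrained. The sketch below is a hypothetical illustration of that idea, not the researchers' procedure; `low_fidelity_weights` and the add-one smoothing are assumptions.

```python
from collections import Counter


def low_fidelity_weights(groups, agrees):
    """Per-sample training weights that up-weight subgroups where the
    explanation model disagrees with the black box more often.
    Hypothetical illustration, not the study's method."""
    total = Counter(groups)
    misses = Counter(g for g, ok in zip(groups, agrees) if not ok)
    # Smoothed disagreement rate per group, so no group gets weight 0.
    error = {g: (misses[g] + 1) / (total[g] + 2) for g in total}
    mean_error = sum(error.values()) / len(error)
    # Normalize so an "average" group gets weight 1.0.
    return [error[g] / mean_error for g in groups]


# Made-up agreement flags: group M always matches, group F misses 3 of 5.
groups = ["M"] * 5 + ["F"] * 5
agrees = [True] * 5 + [False, True, False, True, False]
weights = low_fidelity_weights(groups, agrees)
print(weights)
```

With this toy data the worse-served group gets four times the weight of the other (1.6 versus 0.4). As the study found for its own strategies, reweighting of this kind can narrow a gap without guaranteeing that it closes.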

When they probed further, the researchers were surprised to find that an explanation model might indirectly use protected group information, such as sex or race, that it learns from the dataset, even when group labels are hidden.
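A quick way to check for this kind of indirect leakage is to test whether the hidden group label can be predicted from the features the model does see. The sketch below uses a leave-one-out nearest-centroid classifier for simplicity; it is an illustrative assumption rather than the researchers' analysis, and the function name, feature values, and group names are made up.

```python
import math


def proxy_leakage_accuracy(features, groups):
    """Leave-one-out nearest-centroid accuracy at predicting the hidden
    group label from the remaining features. Accuracy well above chance
    suggests the features act as a proxy for the protected attribute."""
    correct = 0
    for i, (x, true_g) in enumerate(zip(features, groups)):
        # Build class centroids from every sample except the held-out one.
        sums, counts = {}, {}
        for j, (y, g) in enumerate(zip(features, groups)):
            if j == i:
                continue
            acc = sums.setdefault(g, [0.0] * len(y))
            for k, v in enumerate(y):
                acc[k] += v
            counts[g] = counts.get(g, 0) + 1
        centroids = {g: [s / counts[g] for s in sums[g]] for g in sums}
        # Predict the group whose centroid is closest to the held-out point.
        pred = min(centroids, key=lambda g: math.dist(x, centroids[g]))
        correct += pred == true_g
    return correct / len(features)


# Made-up applicant features (two numeric scores). The group label is never
# a feature, yet the two groups occupy different regions of feature space.
features = [(1.0, 2.0), (1.2, 1.8), (0.9, 2.1), (3.0, 4.0), (3.1, 3.9), (2.9, 4.2)]
groups = ["X", "X", "X", "Y", "Y", "Y"]
print(proxy_leakage_accuracy(features, groups))
```

An accuracy near 1.0, as with these toy points, means the remaining features effectively encode group membership. Accuracy near chance (0.5 for two balanced groups) would suggest little proxy information, at least as far as this simple classifier can detect.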

As more such studies come to light, Balagopalan offers machine-learning users a warning. “Choose the explanation model carefully. But even more importantly, think carefully about the goals of using an explanation model and who it eventually affects,” she says.