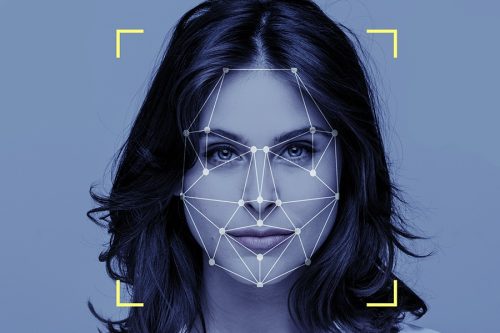

Facial recognition (FR) technology has become the centre of controversy over racial, ethnic, and gender bias

Microsoft has followed Facebook in announcing that it will retire its AI-powered facial analysis tools, including those that infer emotional state and identity attributes such as gender, age, smile, facial hair, hair and makeup.

Until now, Microsoft has offered open-ended access to API technology that can scan people’s faces and infer their emotional state from their facial expressions or movements.

The tech giant said it will stop offering these features to new customers from June 21, while existing customers will have their access revoked on June 30, 2023.

The company added that the decision is part of Microsoft’s ‘Responsible AI Standard’, a framework that guides how it builds AI systems.

“AI is becoming more and more a part of our lives, and yet, our laws are lagging behind. They have not caught up with AI’s unique risks or society’s needs,” said Natasha Crampton, chief responsible AI officer at Microsoft.

“While we see signs that government action on AI is expanding, we also recognize our responsibility to act. We believe that we need to work towards ensuring AI systems are responsible by design,” she said in a statement.

Row over facial recognition technology

Microsoft is not the first to discard such a system: Meta had already announced that it would shut down Facebook’s facial recognition system and delete the face scan data of more than one billion users.

Unlike ‘Face Detection’, which only locates faces in an image or video, ‘Facial Recognition’ goes further: it matches a detected face against a database of faces and brings up more details about the person and their location based on images and videos.
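The step of matching a detected face against a database of faces can be sketched in a few lines. This is a minimal illustration only, assuming each face has already been converted into a numeric feature vector by some model; the vectors, the names `person_a` and `person_b`, the `match_face` function and the 0.8 threshold are all invented for the example and do not describe any vendor's service.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors, from -1 (opposite) to 1 (same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def match_face(probe, database, threshold=0.8):
    """Return the best-matching enrolled name, or None if nothing reaches the threshold.

    `threshold` is a hypothetical cut-off chosen for this example.
    """
    best_name, best_score = None, threshold
    for name, enrolled in database.items():
        score = cosine_similarity(probe, enrolled)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name

# Hypothetical enrolled faces, each reduced to a tiny three-number vector.
database = {
    "person_a": [0.9, 0.1, 0.0],
    "person_b": [0.0, 0.2, 0.9],
}

print(match_face([0.8, 0.2, 0.1], database))  # close to person_a's vector
print(match_face([0.1, 0.9, 0.1], database))  # resembles nobody enrolled
```

The threshold is where errors enter: set it too low and strangers are "matched", set it too high and enrolled people go unrecognised, and the error rates can differ between demographic groups.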

Although facial recognition is less accurate as a biometric tool than iris or fingerprint recognition, governments and private companies have deployed it widely for advanced human–computer interaction, video surveillance and automatic indexing of images because it is contactless, an advantage that mattered especially during the pandemic.

However, facial recognition (FR) technology has become the focus of controversy over racial, ethnic, and gender bias. Concerns include violations of citizens’ privacy, incorrect identifications, the reinforcement of gender norms and use in racial profiling.

Here are some bizarre findings on FR:

- A research paper from the University of Colorado showed that facial recognition software from Amazon, Clarifai, Microsoft, and others was 95% accurate for cisgender men but often misidentified trans people.

- Another study by researchers at the University of Maryland found that face detection services from Amazon, Microsoft, and Google remain flawed and more likely to fail with older, darker-skinned people compared with their younger, ‘whiter’ counterparts.

- FR tends to favor “feminine-presenting” people while discriminating against certain physical appearances.

- In the University of Maryland study, the researchers tested the services offered by Amazon, Microsoft, and Google on over five million images with artificially added corruptions such as blur, noise, and weather effects. They found bias in many of the results.

- Amazon’s face detection API was found to be 145% more likely to make a face detection error for the oldest people.

- Amazon’s API tends to make fewer errors on images of people with feminine facial features than on images of people with masculine features.

- The overall error rate for lighter and darker skin types was 8.5% and 9.7%, respectively — a 15% increase for the darker skin type.

- Dim lighting in particular worsened the detection error rate for some demographics.
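The percentages in these findings are relative increases, not percentage-point gaps. As a rough check of the arithmetic, the sketch below uses the rounded skin-type error rates quoted above; the function names are illustrative. The rounded rates give an increase of about 14%, close to the reported 15%, which was presumably computed from unrounded rates (an assumption, not something the study excerpt confirms).

```python
def relative_increase(baseline, other):
    """Return how much larger `other` is than `baseline`, as a percentage of `baseline`."""
    return (other - baseline) / baseline * 100.0

def times_as_likely(percent_more):
    """Convert "X% more likely" into a multiplier of the baseline rate."""
    return 1 + percent_more / 100.0

lighter_error = 8.5  # overall error rate (%) for lighter skin types, as quoted
darker_error = 9.7   # overall error rate (%) for darker skin types, as quoted

gap_points = darker_error - lighter_error
gap_relative = relative_increase(lighter_error, darker_error)

print(f"Gap: {gap_points:.1f} percentage points")
print(f"Relative increase: {gap_relative:.1f}%")
print(f"'145% more likely' means {times_as_likely(145):.2f} times the baseline rate")
```

Read this way, a 1.2-point gap in error rates is a relative increase of roughly one-seventh, and "145% more likely" means errors occur at nearly two and a half times the baseline rate.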

“We found that older individuals, masculine presenting individuals, those with darker skin types, or in photos with dim ambient light all have higher errors ranging from 20-60% … Gender estimation is more than twice as bad on corrupted images as it is on clean images; age estimation is 40% worse on corrupted images,” coauthors of the Maryland study wrote.

A study conducted by researchers at the University of Virginia found that two prominent research-image collections displayed gender bias.

Another collection, 80 Million Tiny Images, was found to contain a range of racist, sexist, and otherwise offensive annotations. Nearly 2,000 images were labeled with the N-word, and others carried labels like “rape suspect” and “child molester.”

In a bizarre complaint in 2015, a software engineer pointed out that the image recognition algorithms in Google Photos were labeling his Black friends as “gorillas.”

Separately, Google’s Cloud Vision API was found to have labeled thermometers held by a Black person as “guns” while labeling thermometers held by a light-skinned person as “electronic devices.”

Amazon, Microsoft, and Google largely discontinued the sale of facial recognition services in 2019 but have so far declined to impose a moratorium on their data or products.

Tracy Pizzo Frey, managing director of responsible AI at Google Cloud, says the FR system has its limitations but that bias detection remains “a very active area of research” at Google “as these help us improve our API.”